During its Cloud Next conference this week, Google unveiled the latest generation of its TPU AI accelerator chip.

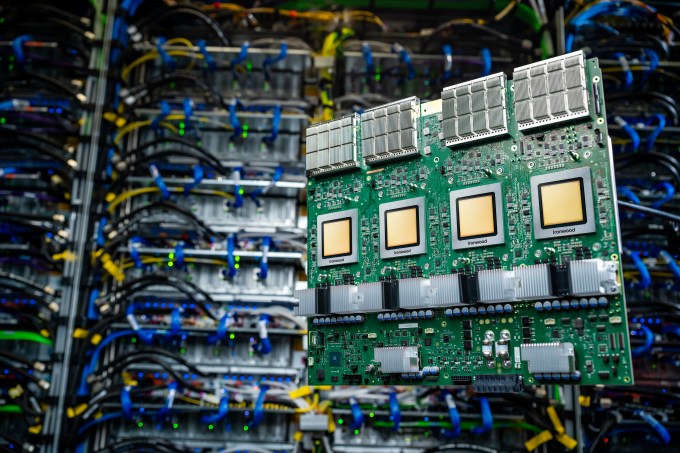

The new chip, called Ironwood, is Google’s seventh-generation TPU and the first optimized for inference, that is, for running AI models. Scheduled to launch later this year for Google Cloud customers, Ironwood will come in two configurations: a 256-chip cluster and a 9,216-chip cluster.

“Ironwood is our most powerful, capable, and energy-efficient TPU yet,” Google Cloud VP Amin Vahdat wrote in a blog post provided to TechCrunch. “And it’s purpose-built to power thinking, inferential AI models at scale.”

Ironwood arrives as competition in the AI accelerator space heats up. Nvidia may have the lead, but tech giants including Amazon and Microsoft are pushing their own in-house solutions. Amazon’s Trainium, Inferentia, and Graviton processors are available through AWS, and Microsoft hosts Azure instances for its Cobalt 100 chip.

According to Google’s internal benchmarking, Ironwood can deliver 4,614 TFLOPs of computing power at peak. Each chip has 192GB of dedicated RAM with bandwidth approaching 7.4 Tbps.
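For a rough sense of scale, the per-chip figures can be multiplied out across the two cluster sizes. The sketch below is a back-of-the-envelope calculation, not a Google benchmark: it assumes peak throughput and memory add up perfectly linearly across chips, which real clusters will not achieve, and the constant and function names are made up for illustration.

```python
# Per-chip figures as quoted in the article (Google's internal benchmarking).
PEAK_TFLOPS_PER_CHIP = 4_614
RAM_GB_PER_CHIP = 192
CLUSTER_SIZES = (256, 9_216)  # the two announced configurations


def cluster_totals(chips: int) -> dict:
    """Naive upper bound: per-chip figure times chip count (assumes linear scaling)."""
    total_tflops = chips * PEAK_TFLOPS_PER_CHIP
    total_ram_gb = chips * RAM_GB_PER_CHIP
    return {
        "chips": chips,
        "peak_exaflops": total_tflops / 1_000_000,  # 1 exaFLOP = 1,000,000 TFLOPs
        "ram_tb": total_ram_gb / 1_000,             # decimal terabytes
    }


for size in CLUSTER_SIZES:
    t = cluster_totals(size)
    print(
        f"{t['chips']:>5} chips: ~{t['peak_exaflops']:.2f} exaFLOPs peak, "
        f"~{t['ram_tb']:.0f} TB of RAM"
    )
```

Under that idealized assumption, the 256-chip cluster tops out at about 1.18 exaFLOPs and the 9,216-chip cluster at about 42.5 exaFLOPs.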

Ironwood has an enhanced specialized core, SparseCore, for processing the types of data common in “advanced ranking” and “recommendation” workloads (such as an algorithm that suggests things you might like). The TPU’s architecture was designed to minimize data movement and latency on-chip, resulting in power savings, Google says.

Google plans to integrate Ironwood with its AI Hypercomputer in Google Cloud in the near future.

“Ironwood represents a unique breakthrough in the age of inference,” Vahdat said, “with increased computation power, memory capacity, […] networking advancements, and reliability.”