Like every Big Tech company these days, Meta has its own flagship generative AI model, called Llama. Llama is somewhat unique among major models in that it is “open,” meaning developers can download it and use it however they please (with certain limitations). That is in contrast to models like Anthropic’s Claude, Google’s Gemini, xAI’s Grok, and most of OpenAI’s ChatGPT models, which can only be accessed through APIs.

In the interest of giving developers choice, however, Meta has also partnered with vendors including AWS, Google Cloud, and Microsoft Azure to make cloud-hosted versions of Llama available. The company also publishes tools, libraries, and recipes in its Llama Cookbook to help developers fine-tune, evaluate, and adapt the models to their domain. With newer generations such as Llama 3 and Llama 4, these capabilities have expanded to include native multimodal support and broader cloud rollouts.

Here is what you need to know about Meta’s Llama, from its capabilities and versions to where you can use it. We will keep this post updated as Meta releases upgrades and introduces new dev tools to support the model’s use.

What is Llama?

Llama is a family of models, not just one. The latest version is Llama 4; it was released in April 2025 and includes three models:

- Scout: 17 billion active parameters, 109 billion total parameters, and a context window of 10 million tokens.

- Maverick: 17 billion active parameters, 400 billion total parameters, and a context window of 1 million tokens.

- Behemoth: Not yet released, but it will have 288 billion active parameters and 2 trillion total parameters.

(In data science, tokens are subdivided bits of raw data, like the syllables “fan,” “tas,” and “tic” in the word “fantastic.”)

A model’s context, or context window, refers to the input data (e.g., text) that the model considers before generating output (e.g., additional text). Long context can prevent models from “forgetting” the content of recent docs and data, and from veering off topic and extrapolating wrongly. However, longer context windows can also result in the model “forgetting” certain safety guardrails and becoming more prone to produce content that goes along with the conversation, which has left some users confused.

For reference, the 10 million-token context window that Llama 4 Scout promises is roughly equal to the text of about 80 average novels. Llama 4 Maverick’s 1 million-token context window equals about eight novels.
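The novel comparison above is simple arithmetic, and a short sketch makes the implied numbers explicit. The per-novel token count below is not a figure from Meta; it is only what falls out of dividing the 10 million-token window by 80 novels, and the names used are illustrative.

```python
# Figures quoted in the article.
SCOUT_CONTEXT_TOKENS = 10_000_000
MAVERICK_CONTEXT_TOKENS = 1_000_000
NOVELS_IN_SCOUT_CONTEXT = 80  # "about 80 average novels"

# Implied size of an "average novel" in tokens (derived here, not stated by Meta).
tokens_per_novel = SCOUT_CONTEXT_TOKENS / NOVELS_IN_SCOUT_CONTEXT


def novels_that_fit(context_tokens: int) -> float:
    """Return how many average novels fit in a context window of this size."""
    return context_tokens / tokens_per_novel


print(tokens_per_novel)                          # 125000.0
print(novels_that_fit(MAVERICK_CONTEXT_TOKENS))  # 8.0
```

Under that assumption an average novel comes to about 125,000 tokens, which is consistent with the eight-novel figure for Maverick.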

According to Meta, all of the Llama 4 models were trained on “large amounts of unlabeled text, image, and video data” to give them “broad visual understanding,” as well as on 200 languages.

Llama 4 Scout and Maverick are Meta’s first open-weight, natively multimodal models. They are built using a “mixture-of-experts” (MoE) architecture, which reduces computational load and improves efficiency in training and inference. Scout, for example, has 16 experts, and Maverick has 128 experts.

Llama 4 Behemoth includes 16 experts, and Meta refers to it as a teacher for the smaller models.
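To show why a mixture-of-experts design lowers the compute per token, here is a toy sketch of top-k routing. It is not Meta’s implementation: the “experts” are trivial functions, the router scores are set by hand rather than learned, and `top_k=1` is an assumption chosen for illustration.

```python
import math


def softmax(scores):
    """Turn raw router scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical 'expert': a tiny function standing in for a sub-network."""
    return lambda x: scale * x


def moe_forward(x, experts, router_scores, top_k=1):
    """Run only the top_k highest-scoring experts and mix their outputs."""
    weights = softmax(router_scores)
    ranked = sorted(range(len(experts)), key=lambda i: weights[i], reverse=True)
    chosen = ranked[:top_k]
    norm = sum(weights[i] for i in chosen)
    output = sum(weights[i] / norm * experts[i](x) for i in chosen)
    return output, chosen


experts = [make_expert(s) for s in range(1, 17)]  # 16 experts, matching Scout's count
scores = [0.0] * 16
scores[4] = 5.0  # pretend the router strongly prefers expert 4 for this token

output, used = moe_forward(2.0, experts, scores, top_k=1)
print(used, output)  # [4] 10.0
```

Only one of the 16 toy experts runs for this input, which is the same idea behind the gap between active and total parameters: Scout’s 17 billion active parameters are roughly 16% of its 109 billion total, and Maverick’s are about 4% of 400 billion.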

Llama 4 builds on the Llama 3 series, which included the 3.1 and 3.2 models widely used for instruction-tuned applications and cloud deployment.

What can Llama do?

Like other generative AI models, Llama can perform a range of assistive tasks, such as coding and answering basic math questions, and it supports at least 12 languages (Arabic, English, German, French, Hindi, Indonesian, Italian, Portuguese, Spanish, Tagalog, Thai, and Vietnamese). Most text-based workloads, such as analyzing large files like PDFs and spreadsheets, are within its purview, and all Llama 4 models support text, image, and video input.

Llama 4 Scout is designed for longer workflows and massive data analysis. Maverick is a generalist model that is better at balancing reasoning power and response speed, and it is suitable for coding, chatbots, and technical assistants. Behemoth is designed for advanced research, model distillation, and STEM tasks.

Llama models, including Llama 3.1, can be configured to use third-party applications, tools, and APIs to perform tasks. They are trained to use Brave Search to answer questions about recent events; the Wolfram Alpha API for math- and science-related queries; and a Python interpreter to validate code. However, these tools require proper configuration and are not automatically enabled out of the box.
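The point that tools must be configured before a model can use them can be illustrated with a small dispatch sketch. Everything here is hypothetical: the JSON shape of the tool call, the `ENABLED_TOOLS` registry, and the stub functions are assumptions for illustration, not Llama’s actual tool-calling format or any real search or interpreter API.

```python
import json


def web_search(query: str) -> str:
    """Hypothetical stand-in for a search backend; returns no real results."""
    return f"(would search) {query}"


def run_python(code: str) -> str:
    """Hypothetical stand-in for a sandboxed interpreter; only echoes the request."""
    return f"(would run) {code}"


# Tools are opt-in: nothing is callable until the developer registers it.
# run_python is defined above but deliberately left out of the registry.
ENABLED_TOOLS = {"web_search": web_search}


def dispatch(tool_call_json: str) -> str:
    """Route a model-emitted tool call to a registered function, if enabled."""
    call = json.loads(tool_call_json)
    tool = ENABLED_TOOLS.get(call["name"])
    if tool is None:
        return f"tool '{call['name']}' is not configured"
    return tool(**call["arguments"])


print(dispatch('{"name": "web_search", "arguments": {"query": "latest Llama release"}}'))
print(dispatch('{"name": "run_python", "arguments": {"code": "print(1 + 1)"}}'))
```

The second call is refused because the interpreter was never registered, which mirrors the article’s caveat that the model’s training alone does not make a tool available.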

Where can I use Llama?

If you are simply looking to chat with Llama, it powers the Meta AI chatbot experience on Facebook Messenger, WhatsApp, Instagram, Oculus, and Meta.ai in 40 countries. Fine-tuned versions of Llama are used in Meta AI experiences in more than 200 countries and territories.

The Llama 4 models Scout and Maverick are available on Llama.com and from Meta’s partners, including the AI developer platform Hugging Face. Behemoth is still in training. Developers building with Llama can download, use, or fine-tune the model across most of the popular cloud platforms. Meta claims it has more than 25 partners hosting Llama, including Nvidia, Databricks, Groq, Dell, and Snowflake. And while “selling access” to Meta’s openly available models is not Meta’s business model, the company makes some money through revenue-sharing agreements with model hosts.

Some of these partners have built additional tools and services on top of Llama, including tools that let the models reference proprietary data and enable them to run at lower latencies.

Importantly, the Llama license constrains how developers can deploy the model: App developers with more than 700 million monthly users must request a special license from Meta, which the company will grant at its discretion.

In May 2025, Meta launched a new program to encourage startups to adopt its Llama models. Llama for Startups gives companies support from Meta’s Llama team and access to potential funding.

Alongside Llama, Meta provides tools intended to make the model “safer” to use:

- Llama Guard, a moderation framework.

- CyberSecEval, a cybersecurity risk-assessment suite.

- Llama Firewall, a security guardrail designed to enable building secure AI systems.

- Code Shield, which provides support for inference-time filtering of insecure code produced by LLMs.

Llama Guard tries to detect potentially problematic content either fed into or generated by a Llama model, including content related to criminal activity, child exploitation, copyright violations, hate, self-harm, and sexual abuse.

That said, it is clearly not a silver bullet, since Meta’s own previous guidelines allowed the chatbot to engage in sensual and romantic chats with minors, and some reports show that those turned into sexual conversations. Developers can customize what gets blocked and apply the blocks across all the languages Llama supports.

Like Llama Guard, Prompt Guard can block text intended for Llama, but only text meant to “attack” the model and get it to behave in undesirable ways. Meta claims that Llama Guard can defend against explicitly malicious prompts (e.g., jailbreaks that attempt to get around Llama’s built-in safety filters) in addition to prompts that contain “injected inputs.” Llama Firewall works to detect and prevent risks such as prompt injection, insecure code, and risky tool interactions, and Code Shield helps mitigate insecure code suggestions and offers secure command execution for seven programming languages.
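The general pattern these guardrails follow, screening what goes into a model and what comes out of it, can be sketched in a few lines. This is not Llama Guard or Prompt Guard: the phrase list, the `is_flagged` check, and both stand-in models are hypothetical, and a real moderation model classifies content rather than matching strings.

```python
from typing import Callable

BLOCKED_PHRASES = ("steal a password", "build a weapon")  # illustrative only


def is_flagged(text: str) -> bool:
    """Hypothetical stand-in for a moderation model: simple phrase matching."""
    lowered = text.lower()
    return any(phrase in lowered for phrase in BLOCKED_PHRASES)


def guarded_chat(prompt: str, model: Callable[[str], str]) -> str:
    """Check the prompt before the model runs and the reply after it runs."""
    if is_flagged(prompt):
        return "[input blocked]"
    reply = model(prompt)
    if is_flagged(reply):
        return "[output blocked]"
    return reply


def polite_model(prompt: str) -> str:
    """Hypothetical model that always answers harmlessly."""
    return "Happy to help with: " + prompt


def leaky_model(prompt: str) -> str:
    """Hypothetical model that produces flagged text regardless of the prompt."""
    return "Step one: steal a password."


print(guarded_chat("How do I steal a password?", polite_model))  # [input blocked]
print(guarded_chat("Tell me a story", leaky_model))              # [output blocked]
print(guarded_chat("Tell me a story", polite_model))             # Happy to help with: Tell me a story
```

Checking both directions matters because a harmless prompt can still produce a problematic reply, as the second call shows.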

As for CyberSecEval, it is less a tool than a collection of benchmarks for measuring model security. CyberSecEval can assess the risk a Llama model poses (at least according to Meta’s criteria) to app developers and end users in areas such as “automated social engineering” and “scaling offensive cyber operations.”

Llama’s limitations

Llama comes with certain risks and limitations, like all generative AI models. For example, while its most recent models have multimodal features, those are mainly limited to the English language for now.

Zooming out, Meta used a dataset of pirated e-books and articles to train its Llama models. A federal judge recently sided with Meta in a copyright lawsuit brought against the company by a group of book authors, ruling that the use of copyrighted works for training fell under “fair use.” However, if Llama regurgitates a copyrighted snippet and someone uses it in a product, they could potentially be infringing on copyright and be liable.

Meta also controversially trains its AI on Instagram and Facebook posts, photos, and captions, and makes it difficult for users to opt out.

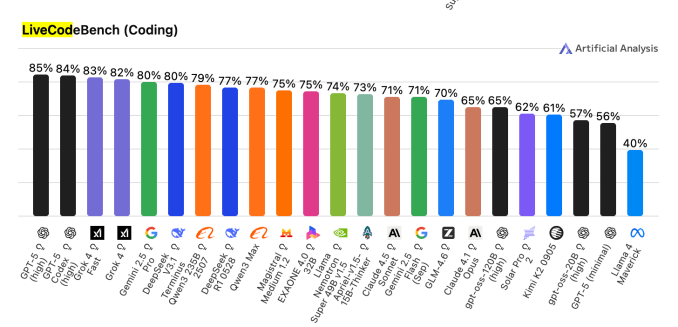

Programming is another area where it is wise to tread lightly when using Llama. That is because Llama might, perhaps more so than its generative AI counterparts, produce buggy or insecure code. On LiveCodeBench, a benchmark that tests AI models on competitive coding problems, Meta’s Llama 4 Maverick model achieved a score of 40%. That compares to 85% for OpenAI’s GPT-5 high and 83% for xAI’s Grok 4 Fast.

As always, it is best to review any AI-generated code before incorporating it into a service or software.

Finally, as with other AI models, Llama models are still guilty of generating plausible-sounding but false or misleading information, whether in coding, legal guidance, or emotional conversations with AI personas.

This was originally published on September 8, 2024 and is updated regularly with new information.